Half precision dynamic range, including denormals, is 40 powers of 2. For comparison, single precision dynamic range, including denormals, is 277 powers of 2.

The Volta generation of GPUs introduces Tensor Cores, which provide 8x more throughput than single precision math pipelines. Each Tensor Core performs $D = A \times B + C$, where A, B, C, and D are 4x4 matrices. A and B are half precision matrices, whereas C and D can be either half or single precision matrices. In other words, Tensor Core math can accumulate half precision products into either single or half precision outputs.

In practice, higher performance is achieved when the dimensions of the matrices being multiplied are multiples of 8. cuDNN v7 and cuBLAS 9 include some functions that invoke Tensor Core operations; for performance reasons, these require that input and output feature map sizes are multiples of 8. For more information, see the NVIDIA cuDNN Developer Guide.

Half precision is attractive on the V100 because the GPU has 640 Tensor Cores, all of which can perform 4x4 matrix multiply-accumulate operations at the same time. The theoretical peak performance of the Tensor Cores on the V100 is approximately 120 TFLOPS.
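
To make the accumulation behaviour concrete, here is a minimal Python sketch that emulates $D = A \times B + C$ on 4x4 matrices in software. It assumes nothing about how the hardware actually orders or rounds its internal sums; the helper names `to_fp16`, `to_fp32`, and `mma_4x4` are hypothetical, and Python's `struct` formats `"e"` and `"f"` are used only to round values to half and single precision. It shows why accumulating FP16 products into an FP32 output matters: small contributions that an FP16 accumulator rounds away survive in FP32.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def mma_4x4(a, b, c, accumulate_in_fp32=True):
    """Emulate D = A x B + C for 4x4 matrices given as lists of lists.

    A and B are rounded to FP16. The product of two FP16 values is exact in
    FP32, so only the running sum is rounded (to FP32 or FP16) after each add.
    All values are assumed to stay within the FP16 range.
    """
    rnd = to_fp32 if accumulate_in_fp32 else to_fp16
    d = [[0.0] * 4 for _ in range(4)]
    for i in range(4):
        for j in range(4):
            acc = rnd(c[i][j])
            for k in range(4):
                acc = rnd(acc + to_fp16(a[i][k]) * to_fp16(b[k][j]))
            d[i][j] = acc
    return d


a = [[0.5] * 4 for _ in range(4)]
b = [[1.0] * 4 for _ in range(4)]
c = [[2048.0] * 4 for _ in range(4)]
print(mma_4x4(a, b, c, accumulate_in_fp32=True)[0][0])   # 2050.0
print(mma_4x4(a, b, c, accumulate_in_fp32=False)[0][0])  # 2048.0
```

With $C = 2048$, neighbouring FP16 values are 2 apart, so each $+0.5$ product is rounded away by the half-precision accumulator, while the single-precision accumulator reaches the exact result.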

Since DNN training has traditionally relied on the IEEE single-precision format, this guide focuses on how to train with half precision while maintaining the network accuracy achieved with single precision (as in Figure 1). This technique is called mixed-precision training since it uses both single- and half-precision representations.

The IEEE 754 standard defines the following 16-bit half-precision floating point format: 1 sign bit, 5 exponent bits, and 10 fractional bits. The exponent is encoded with 15 as the bias, resulting in an exponent range of $[-14, 15]$ (two exponent values, 0 and 31, are reserved for special values). An implicit leading bit of 1 is assumed for normalized values, just like in other IEEE floating point formats. Half precision format leads to the following dynamic range and precision:

- Normalized values: $2^{-14}$ to $2^{15}$, with 11 bits of significand.
- Denormal values: $2^{-24}$ to $2^{-15}$; significand bits decrease as the exponent gets smaller. An exponent $k$ in the range $[-24, -15]$ results in $(25 + k)$ bits of significand precision.

Figure 1. Training curves for the bigLSTM English language model show the benefits of the mixed-precision training techniques. Mixed precision without loss scaling (grey) diverges after a while, whereas mixed precision with loss scaling (green) matches the single precision model (black).

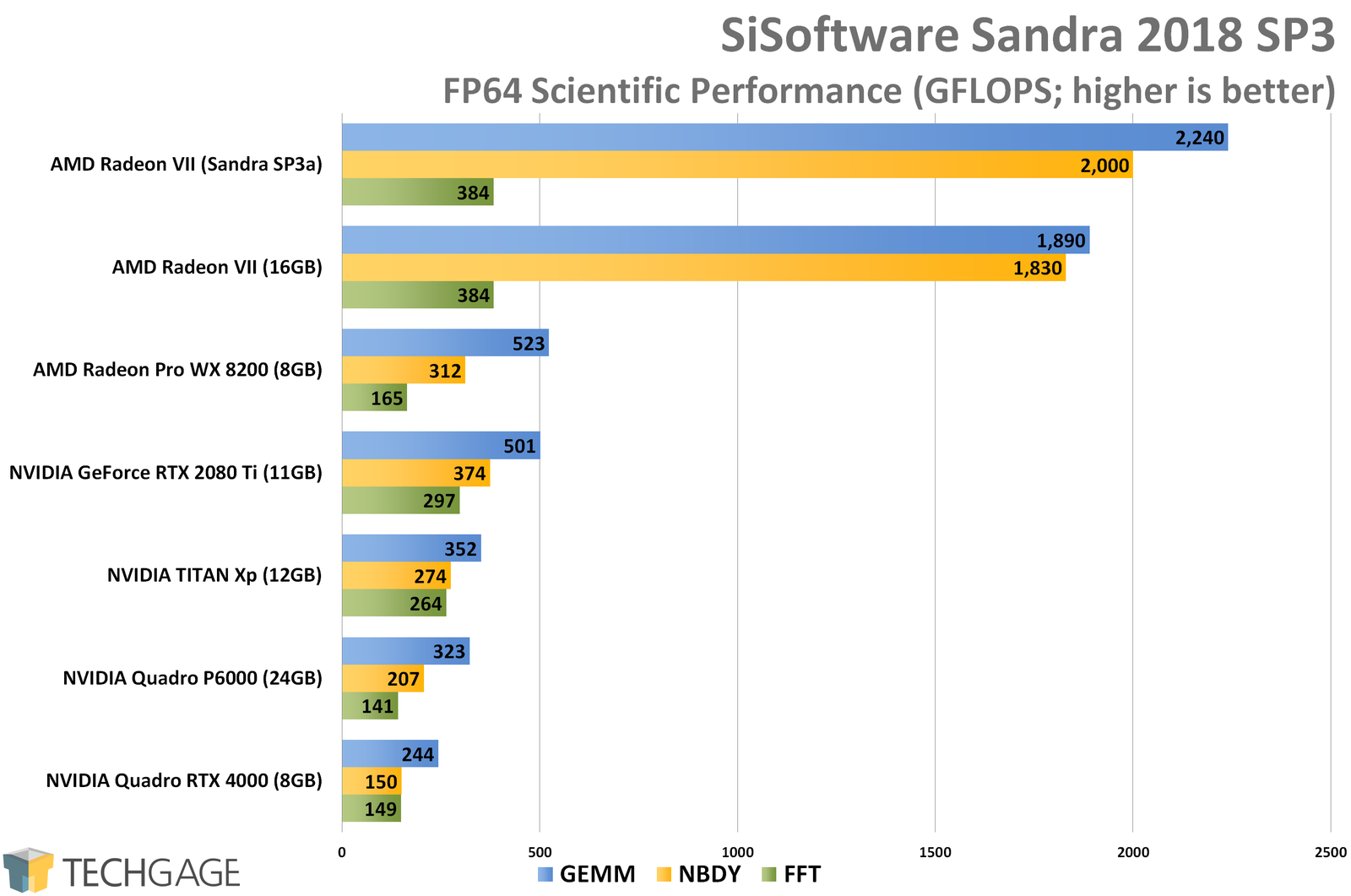
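
The half-precision limits described above can be checked directly. The following Python sketch (all helper names are hypothetical) decodes the sign, exponent, and fraction fields by hand using the bias of 15 and the implicit leading bit, then counts the representable values in each power-of-two interval to confirm that denormals near $2^k$ carry $25 + k$ bits of precision, and that the dynamic range including denormals spans about 40 powers of 2. It relies on Python's `struct` module, whose `"e"` format is IEEE 754 binary16.

```python
import math
import struct


def fp16_fields(x: float):
    """Return the (sign, biased exponent, fraction) fields of x encoded as FP16."""
    bits = struct.unpack("<H", struct.pack("<e", x))[0]
    return bits >> 15, (bits >> 10) & 0x1F, bits & 0x3FF


def fp16_from_fields(sign: int, exponent: int, fraction: int) -> float:
    """Decode FP16 fields by hand: 5-bit exponent with bias 15, 10-bit fraction."""
    if exponent == 31:  # reserved: infinity (fraction 0) or NaN
        value = math.inf if fraction == 0 else math.nan
    elif exponent == 0:  # denormal: no implicit bit, exponent fixed at -14
        value = (fraction / 1024) * 2.0 ** -14
    else:  # normalized: implicit leading 1
        value = (1 + fraction / 1024) * 2.0 ** (exponent - 15)
    return -value if sign else value


# Every finite, positive FP16 value (encodings 0x0001 through 0x7BFF).
POSITIVE_FP16 = [struct.unpack("<e", struct.pack("<H", bits))[0] for bits in range(1, 0x7C00)]


def significand_bits(k: int) -> int:
    """Bits of precision in [2**k, 2**(k+1)): one more than log2 of the value count."""
    lo, hi = 2.0 ** k, 2.0 ** (k + 1)
    count = sum(1 for v in POSITIVE_FP16 if lo <= v < hi)
    return int(math.log2(count)) + 1


MAX_NORMAL = fp16_from_fields(0, 30, 0x3FF)  # 65504.0
MIN_DENORMAL = fp16_from_fields(0, 0, 1)     # 2**-24

print(MAX_NORMAL, MIN_DENORMAL)
print([significand_bits(k) for k in (-24, -20, -15, -14, 15)])  # [1, 5, 10, 11, 11]
print(round(math.log2(MAX_NORMAL / MIN_DENORMAL)))  # 40
```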

Deep Neural Networks (DNNs) have led to breakthroughs in a number of areas. DNN complexity has been increasing to achieve these results, which in turn has increased the computational resources required to train these networks. One way to lower the required resources is to use lower-precision arithmetic, which has the following benefits:

- Reduced memory use. Half-precision floating point format (FP16) uses 16 bits, compared to 32 bits for single precision (FP32). Lowering the required memory enables training of larger models or training with larger mini-batches.
- Shorter training or inference time. Execution time can be sensitive to memory or arithmetic bandwidth. Half precision halves the number of bytes accessed, thus reducing the time spent in memory-limited layers. NVIDIA GPUs offer up to 8x more half precision arithmetic throughput compared to single precision, thus speeding up math-limited layers.

Mixed precision is the combined use of different numerical precisions in a computational method. Single precision (also known as 32-bit) is a common floating point format (`float` in C-derived programming languages), and 64-bit is known as double precision (`double`). Compared with higher-precision FP32 or FP64, half-precision (FP16) data reduces the memory usage of a neural network, allowing larger networks to be trained and deployed, and FP16 data transfers take less time than FP32 or FP64 transfers.

The ability to train deep learning networks with lower precision was introduced in the Pascal architecture and first supported in CUDA 8 in the NVIDIA Deep Learning SDK. Since the introduction of Tensor Cores in the Volta and Turing architectures, switching to mixed precision has yielded significant training speedups, up to 3x overall on the most arithmetically intense model architectures. Using mixed precision training requires two steps:

1. Porting the model to use the FP16 data type where appropriate.
2. Adding loss scaling to preserve small gradient values.
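
Loss scaling, the second step of mixed precision training, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a framework API: gradients are multiplied directly by the scale factor to stand in for scaling the loss before backpropagation (by the chain rule, that scales every gradient by the same factor), FP16 rounding is emulated with `struct`, and the `DynamicLossScaler` class, its initial scale of $2^{15}$, and its growth interval are hypothetical. The sketch shows a gradient of $10^{-8}$ underflowing to zero in FP16 unless it is scaled first, followed by one common variant, dynamic loss scaling, which halves the scale and skips the step when an overflow produces `inf`.

```python
import math
import struct


def to_fp16(x: float) -> float:
    """Round to the nearest FP16 value; overflow saturates to +/-inf as a GPU cast would."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:  # struct refuses to pack values beyond the FP16 range
        return math.copysign(math.inf, x)


# Static loss scaling: a gradient below FP16's smallest denormal (2**-24) is lost...
tiny_grad = 1e-8
print(to_fp16(tiny_grad))  # 0.0
# ...but survives if it is scaled up first and unscaled in higher precision.
print(to_fp16(tiny_grad * 1024.0) / 1024.0)  # close to 1e-8


class DynamicLossScaler:
    """Hypothetical helper: shrink the scale on overflow, grow it after clean steps."""

    def __init__(self, scale=2.0 ** 15, growth_interval=2000):
        self.scale = scale
        self.growth_interval = growth_interval
        self.clean_steps = 0

    def unscale_or_skip(self, scaled_fp16_grads):
        """Return unscaled gradients, or None if the optimizer step must be skipped."""
        if any(math.isinf(g) or math.isnan(g) for g in scaled_fp16_grads):
            self.scale /= 2.0  # overflow: back off and skip this step
            self.clean_steps = 0
            return None
        grads = [g / self.scale for g in scaled_fp16_grads]  # unscale before any growth
        self.clean_steps += 1
        if self.clean_steps == self.growth_interval:
            self.scale *= 2.0  # no overflow for a while: try a larger scale
            self.clean_steps = 0
        return grads


scaler = DynamicLossScaler(scale=2.0 ** 15, growth_interval=2)
for step in range(3):
    # Stand-in for backprop of the scaled loss: 3.0 * 2**15 = 98304 overflows FP16.
    scaled = [to_fp16(g * scaler.scale) for g in (tiny_grad, 3.0)]
    print(step, scaler.unscale_or_skip(scaled), scaler.scale)
```

Using a power of two as the scale factor means that scaling and unscaling only change the exponent, so, barring overflow or underflow, they add no rounding error of their own.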
Mixed precision training offers significant computational speedup by performing operations in half-precision format, while storing minimal information in single-precision to retain as much information as possible in critical parts of the network.
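
Which values to keep in single precision is a design choice that this post does not spell out; a common one, assumed here, is an FP32 master copy of the weights. The minimal Python sketch below (hypothetical helper names, with `struct` used to emulate FP16 and FP32 rounding) applies 100 updates of $10^{-4}$ to a weight of 1.0. Stored only in FP16, the weight never moves, because neighbouring FP16 values just above 1.0 are $2^{-10} \approx 9.8 \times 10^{-4}$ apart; with an FP32 master copy the updates accumulate, and the FP16 copy used for compute reflects them.

```python
import struct


def to_fp16(x: float) -> float:
    """Round to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def train(steps: int, update: float, fp32_master: bool) -> float:
    """Apply `steps` identical updates to a weight initialised to 1.0.

    Returns the FP16 copy of the weight that forward and backward passes would use.
    """
    rnd = to_fp32 if fp32_master else to_fp16
    weight = rnd(1.0)
    step = rnd(update)
    for _ in range(steps):
        weight = rnd(weight + step)  # the optimizer update happens at this precision
    return to_fp16(weight)


print(train(100, 1e-4, fp32_master=False))  # 1.0: each update is rounded away
print(train(100, 1e-4, fp32_master=True))   # 1.009765625: the nearest FP16 value to ~1.01
```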
